Last week, the U.S. Treasury Department's Office of Foreign Assets Control sanctioned Operation Zero, a Russian company that brokers zero-day vulnerabilities to government buyers. The sanction wasn't issued because Operation Zero discovered these exploits. It was issued because Peter Williams, an Australian national working for a U.S. defense contractor, stole eight proprietary cyber tools built for exclusive use by the U.S. government and select allies, and sold them directly to the broker.

That’s eight tools created to protect U.S. national security infrastructure that are now, by Treasury's own account, in the hands of state-level actors with the resources and intent to use them.

This is a story about what happens when your entire security model assumes the person sitting at the desk is trustworthy.

🛡️ Is Your Internal Trust Your Greatest Vulnerability?

Most defense contractors focus on keeping hackers out, but 2026's biggest threat is the credentialed insider. If an employee downloaded your most sensitive technical data today, would it be plaintext or protected?

The Insider Threat That Policy Cannot Solve

Williams was cleared and had authorized access. He sat inside the perimeter, which means every network-layer control, intrusion detection system, and access log built to catch external attackers was, by design, not watching him.

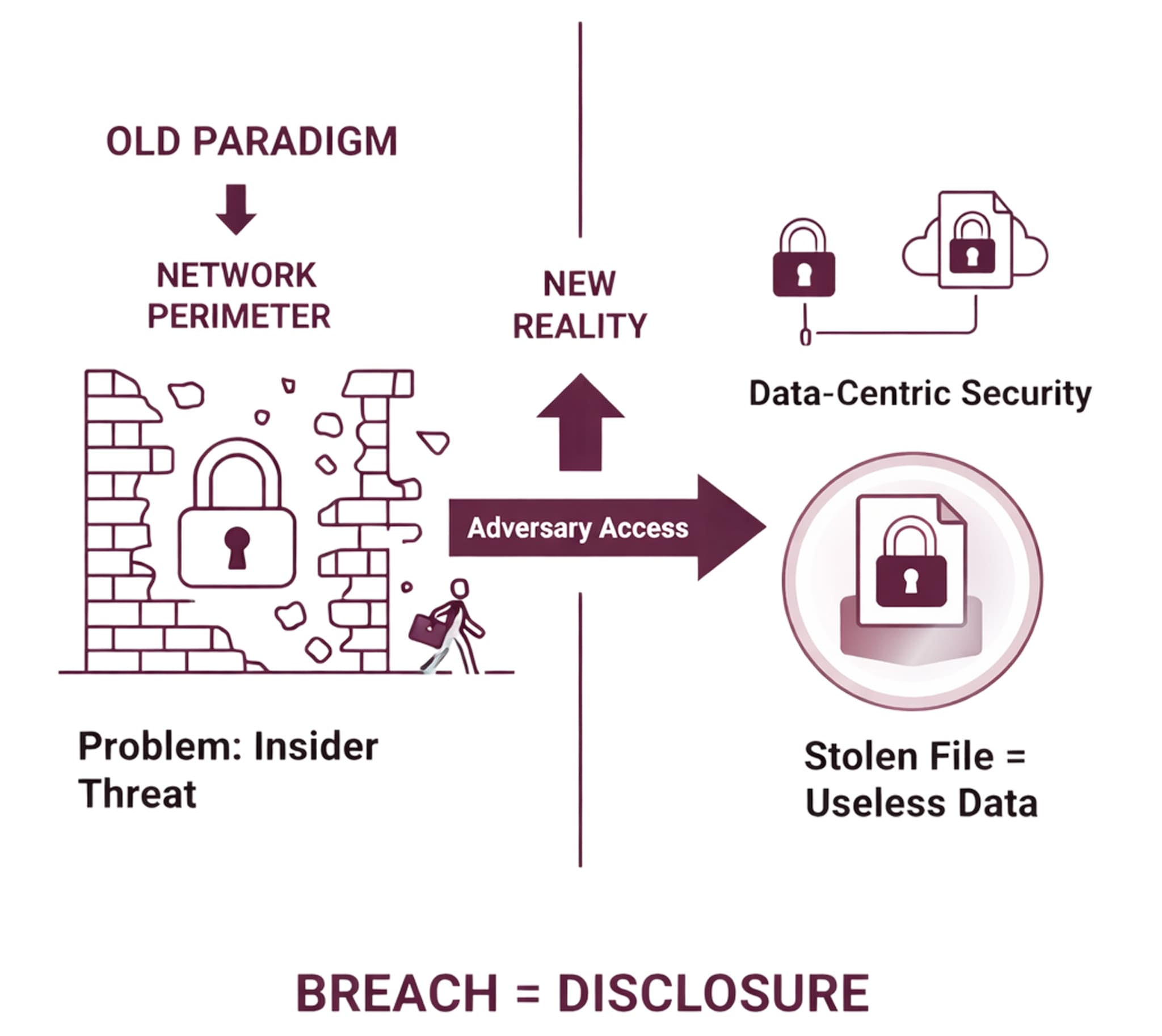

That's the structural problem with perimeter security. It is built on a binary: inside the network is trusted, outside is not. The moment a cleared insider decides to become an adversary, that entire model collapses. Not partially, but entirely.

The implication is more disturbing than the headline suggests. These weren't generic exploits purchased from a dark web forum. These were tools engineered for U.S. government operations, which means whoever now holds them understands exactly what they were built to target and how the systems they're designed to defeat actually work.

The adversary didn't break in; they bought the keys.

Cleared Networks Are Not Safe Networks

The defense industrial base operates under the assumption that security clearances and access controls create a meaningful security boundary. CMMC, ITAR, and EAR compliance all reinforce this assumption; they define who can access what, and audit whether those definitions are being followed. They do not, by design, answer the question of what happens to the data once a cleared person decides to take it.

The Williams case demonstrates what insider threat researchers have argued for years: logical access controls tell you who opened the file. They do not prevent that person from copying it, exfiltrating it, or selling it. The proprietary exploit code that left that defense contractor's network didn't require a breach; it required a USB drive and a motivated employee.

Once those files left the building, they were readable by anyone who received them. The Russian broker didn't need to decrypt anything. The files were already in plaintext because the security posture that protected them ended at the network edge.

What Happens When The Data Protects Itself

The core failure in the Operation Zero case is not that a cleared insider turned. Insider threats are a permanent feature of any organization with human employees. The failure is that when he did turn, the files he carried out were immediately useful to an adversary.

Data-centric security inverts this. Rather than asking "who is allowed to access this network," it asks "what should this file be allowed to do, and with whom, regardless of where it travels."

Under a protection-follows-the-file model, where encryption and access policy are bound to the document itself rather than to the network hosting it, those eight stolen exploit tools would have been useless the moment they left the authorized environment. The files would still be encrypted. The policy controls, which verify device identity, user credentials, geolocation, and contextual access conditions on every open, would have refused to release them outside the authorized boundary. The Russian broker would have received eight files that it couldn't read.
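To make the idea concrete, here is a minimal sketch of a policy check that runs on every open. It is an illustration under stated assumptions, not a description of any particular product: the `KeyService`, `FilePolicy`, and `AccessContext` names, the identifiers, and the three conditions checked are all hypothetical, and the encryption step itself is left out. What it shows is the decision that matters: the decryption key is released only when the user, device, and location all match the policy bound to the file.

```python
from dataclasses import dataclass
from typing import Optional
import secrets


@dataclass(frozen=True)
class AccessContext:
    """Facts about one attempt to open a protected file (hypothetical fields)."""
    user_id: str
    device_id: str
    country: str


@dataclass(frozen=True)
class FilePolicy:
    """Policy bound to a single file; the field names are illustrative."""
    allowed_users: frozenset
    allowed_devices: frozenset
    allowed_countries: frozenset

    def permits(self, ctx: AccessContext) -> bool:
        # Every condition must hold; a single mismatch denies the open.
        return (
            ctx.user_id in self.allowed_users
            and ctx.device_id in self.allowed_devices
            and ctx.country in self.allowed_countries
        )


class KeyService:
    """Hypothetical key service: keeps the keys and releases them per policy."""

    def __init__(self) -> None:
        self._records = {}  # file_id -> (policy, key)

    def protect(self, file_id: str, policy: FilePolicy) -> None:
        # The key stays with the service; only ciphertext would travel
        # with the file (the encryption itself is omitted in this sketch).
        self._records[file_id] = (policy, secrets.token_bytes(32))

    def request_key(self, file_id: str, ctx: AccessContext) -> Optional[bytes]:
        record = self._records.get(file_id)
        if record is None:
            return None
        policy, key = record
        return key if policy.permits(ctx) else None


service = KeyService()
service.protect("tool-1", FilePolicy(
    allowed_users=frozenset({"analyst-a"}),
    allowed_devices=frozenset({"workstation-17"}),
    allowed_countries=frozenset({"US"}),
))

inside = AccessContext("analyst-a", "workstation-17", "US")
copied = AccessContext("analyst-a", "personal-laptop", "US")

print(service.request_key("tool-1", inside) is not None)  # True
print(service.request_key("tool-1", copied) is not None)  # False
```

In this sketch the same user with the same credentials is refused once the file is opened on an unrecognized device, because the key never leaves the service unless every condition holds. A copied file carries only ciphertext.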

This is the operational difference between protecting a perimeter and protecting the data.

Beyond the Perimeter: Why Data-Centric Security is the New Standard for CMMC & ITAR

The Treasury can sanction a broker, but it cannot unsell those exploits. The intelligence value those eight tools carry has already been transferred.

The question that matters for every defense subcontractor holding CUI, ITAR-controlled technical data, or proprietary government deliverables is straightforward: if your most sensitive files were copied by a cleared employee today, what would an adversary be able to do with them tomorrow?

If the answer is "read them immediately," the perimeter has already failed. The security model needs to change.

🔒 "Inside the Network" is Not the Same as Being Secure.

Ensure your files are only readable by verified identities, even if they are copied, moved, or stolen. Deploy in minutes.