Your DLP is running. Your firewall is up. Your sensitivity labels are applied. And right now, a project manager on your team is uploading a confidential contract to an AI assistant your security team has never evaluated.

That upload doesn’t trigger a single alert.

This is Shadow AI, and it’s not a future risk. According to the ISMS.online State of Information Security Report 2025, 37% of employees have already used GenAI tools without organizational permission. Gartner’s latest research puts a number to the acceleration: the volume of sensitive data being sent to AI tools has increased 6x in the past year alone.

The governance response hasn’t come close to keeping pace. Only 25% of organizations have fully implemented AI governance programs. Just 7% have embedded governance into their operations, despite 93% using AI in some capacity. The gap between adoption and controls is not a gap. It’s a canyon.

This is what happens when security architecture built for one era runs headlong into a threat model it was never designed to address.

Defining the Shadow AI Threat: Unauthorized Decisions, Not Just Unauthorized Tools

Shadow AI is the unauthorized use of AI tools, applications, and systems within an organization without the knowledge or approval of the IT or security team. IBM, Forcepoint, and Wiz all define it along similar lines, but the operational reality is messier than any definition captures.

Shadow IT, its predecessor, was about unauthorized systems: cloud storage accounts, collaboration tools, and personal devices on the corporate network. Shadow AI is about unauthorized decisions. When an employee pastes a contract into ChatGPT, they aren’t just using an unapproved tool. They are making a data governance decision, one that sends sensitive information to a third-party model provider’s infrastructure, potentially contributes it to training data, and bypasses every control your compliance program has established.

There are two distinct forms:

- Employee-driven Shadow AI is the most visible form. Employees use free or consumer-grade AI tools (Gemini, ChatGPT, Grammarly, Notion AI, browser-based summarizers) without going through IT approval. In most cases, they don’t know they should. They are doing their jobs, and AI helps them do those jobs faster.

- Agent-driven Shadow AI is the less visible and higher-risk form. AI agents such as Copilot, Glean, and similar tools integrated with enterprise infrastructure are granted broad OAuth access to SharePoint, Google Drive, or other cloud storage. These agents can access everything the user has permission to see, which is often far more than intended, and sensitive data surfaces in AI training data and responses before anyone realizes it happened.

Both forms share the same root problem: data leaving your governance perimeter through channels your security stack was never built to monitor.

The Unstructured Data Trap: Where Shadow AI Exfiltrates CUI

Here is the dimension that makes Shadow AI uniquely dangerous.

Between 70% and 90% of organizational data is unstructured, including documents, spreadsheets, presentations, emails, and technical specifications. This is precisely the data employees feed to AI tools. It is also precisely the data AI agents crawl when they index your repositories.

DLP was built to monitor structured data flows: file transfers, email attachments, and clipboard events. It was not built for this attack surface. A document pasted into a browser-based AI interface is not a file transfer. An AI agent reading your SharePoint is not an email. Neither event registers as an exfiltration event. Both result in your most sensitive data reaching systems you don’t control.

The IBM Cost of a Data Breach Report 2025 quantifies the cost of this exposure: 97% of organizations that suffered AI-related breaches lacked proper access controls. And according to Vanta’s State of Trust Report 2025, 59% of security leaders say AI-related threats now outpace their expertise and ability to manage them.

That last number is the most important. This is not a gap that additional policy will close. It requires a different architectural approach.

Why Traditional Security Stacks Fail at Shadow AI Data Governance

The traditional security stack was designed for a world where humans accessed data through approved channels; AI has shattered that model.

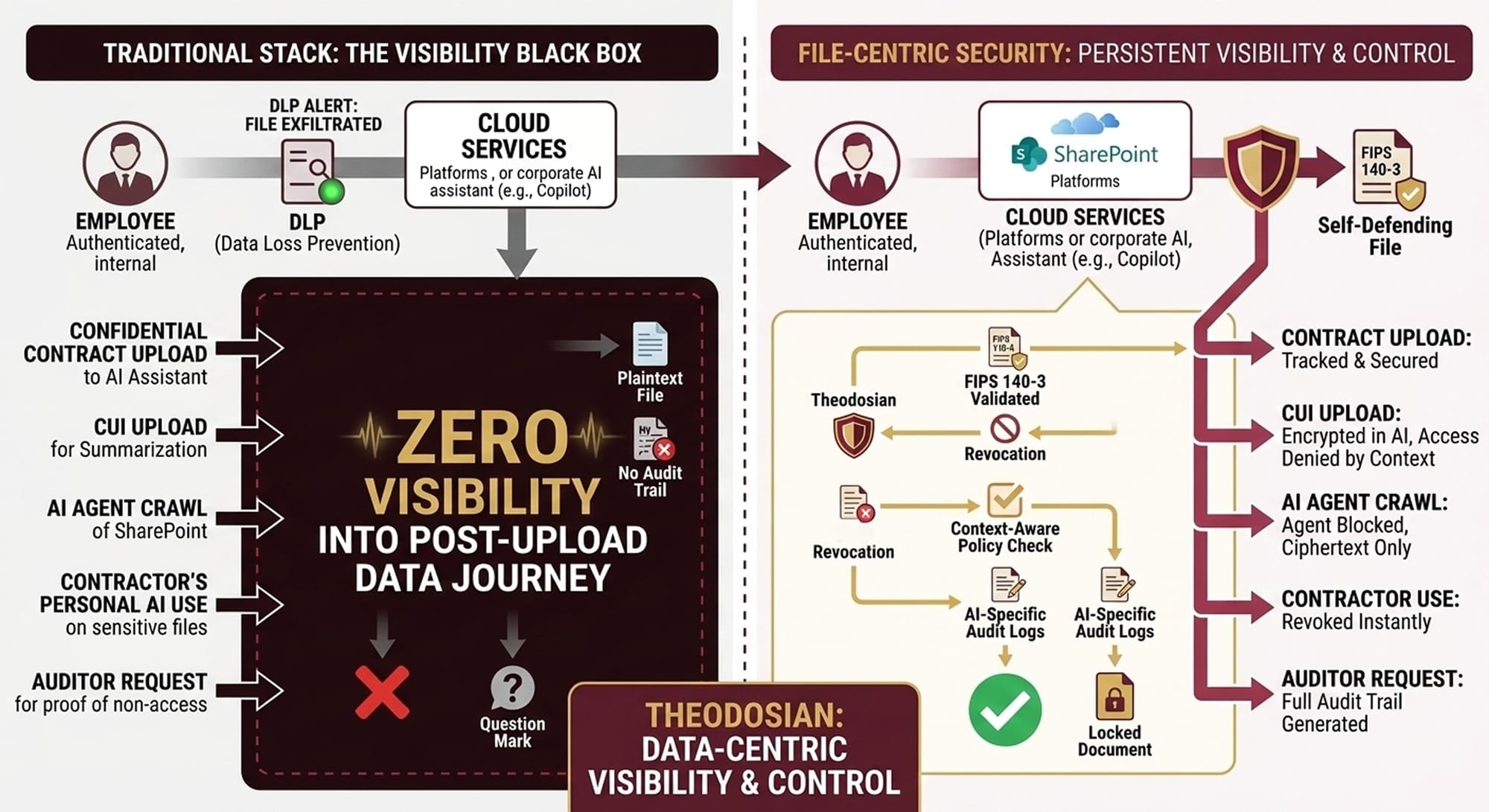

Consider what actually happens in each of these scenarios without file-level controls:

An employee uploads a confidential contract to an AI assistant. The file leaves your environment in plaintext. DLP may alert after the fact, but the data is already exposed to the AI provider, potentially ingested into a training pipeline you have no visibility into or control over.

An employee uploads CUI into an AI tool for summarization. Data is sent to a third-party AI provider. You have zero visibility into how that data is used, stored, or reused. Under CMMC 2.0, this constitutes a control failure. Under ITAR, it may constitute an unauthorized export.

An AI agent crawls your SharePoint for answers. The agent accesses everything the user has permissions to, often far more than intended. Sensitive data surfaces in AI-generated responses before any security team member has seen it.

A contractor uses a personal AI tool on your sensitive files. No visibility at all. The contractor’s AI tool may store, train on, or expose your data. You may never know it happened.

An auditor asks you to prove AI hasn’t accessed regulated data. You can’t. Most DLP tools don’t log AI-specific access patterns. Evidence gaps jeopardize your compliance posture at exactly the moment it matters most.

The structural failure is consistent across all five: perimeter controls and network-layer monitoring assume the threat comes from outside. Shadow AI comes from inside. It is authorized users making unauthorized data decisions, within your authenticated user base, on approved devices, during working hours.

Act One: Block Unauthorized AI at the Data Layer

Closing the Shadow AI gap requires moving the point of security from the network to the data itself. When encryption and access controls are applied at the file level — persistent, policy-bound, and enforced regardless of where the data travels — it doesn’t matter whether a file ends up in an authorized system or an employee’s personal AI account. The data cannot be used without authorization.

This is what data-centric security means in practice, and it addresses Shadow AI in four specific ways:

- Per-file encryption that defeats AI scraping. Every file is individually encrypted. Even if an AI agent gains access to your cloud storage, it sees only ciphertext. There is no master key and no backdoor. AES-256 encryption implemented in a FIPS 140-3 validated cryptographic module meets federal requirements for CUI and ITAR-controlled data.

- Context-aware policies that block unauthorized AI. Define policies that detect and block AI-specific access patterns: unauthorized applications, bulk file reads, and API-based access from unapproved services. Access is denied in real time, before the file is ever opened, not flagged after the fact.

- Automatic containment for anomalous AI behavior. Unusual patterns such as rapid bulk access, off-hours file crawling, and unexpected API calls trigger automatic access freezes. The alert your team receives says “access denied,” not “data stolen.”

- AI-specific audit trails for compliance. Every access attempt, both failed and successful, is logged with full context: who or what (human or AI), which file, when, from where, which application, and whether access was granted or denied. When an auditor asks you to prove AI hasn’t accessed regulated data, you can answer the question.
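
The automatic-containment behavior described in the list above can be sketched as a sliding-window counter that freezes an identity once it reads too many files too quickly. This is a minimal illustration under stated assumptions, not any vendor's implementation: the class name `ContainmentMonitor`, the default threshold of 50 reads, and the 60-second window are hypothetical values chosen for the example.

```python
from collections import defaultdict, deque


class ContainmentMonitor:
    """Freeze an identity that reads too many files in a short window.

    Hypothetical sketch: names and thresholds are illustrative assumptions.
    """

    def __init__(self, max_reads: int = 50, window_seconds: float = 60.0):
        self.max_reads = max_reads
        self.window_seconds = window_seconds
        # identity -> timestamps of recently granted reads
        self._reads = defaultdict(deque)
        self._frozen = set()

    def request_access(self, identity: str, timestamp: float) -> bool:
        """Return True if access is granted, False if it is denied."""
        if identity in self._frozen:
            return False

        recent = self._reads[identity]
        # Drop reads that have aged out of the window.
        while recent and timestamp - recent[0] >= self.window_seconds:
            recent.popleft()

        if len(recent) >= self.max_reads:
            # Bulk-read pattern: freeze before this file is opened.
            self._frozen.add(identity)
            return False

        recent.append(timestamp)
        return True

    def is_frozen(self, identity: str) -> bool:
        return identity in self._frozen


# Usage: a (hypothetical) agent allowed 3 reads per 10 seconds.
monitor = ContainmentMonitor(max_reads=3, window_seconds=10.0)
results = [monitor.request_access("agent-x", t) for t in (0.0, 1.0, 2.0, 3.0, 30.0)]
print(results)  # [True, True, True, False, False]
```

The fourth request is denied and the identity stays frozen afterward, which matches the "access denied, not data stolen" outcome: the decision is made before the file is opened. A real deployment would also need a reviewed path for unfreezing an identity.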

Act Two: Enable the AI Your Organization Needs

Blocking all AI access is not a security strategy. Your teams need AI to stay productive, and your organization needs AI to stay competitive. The question is not whether to use AI but how to use it without losing control of your data. The organizations that answer that question well will move faster than those that don’t, while maintaining the compliance posture that defense contracts, regulated data, and enterprise customers require.

Effective AI governance does not mean blocking everything and hoping for the best. It means building a governed access model where trusted AI systems can work with the data they need, under precisely the conditions you specify, with full audit visibility.

In practice, this means treating AI systems as non-human identities, governed by the same framework you apply to human users. You register your approved AI provider (whether a cloud API, on-premises model, or enterprise copilot) as a recognized identity in your security architecture. You define exactly which files, folders, or data classifications that identity can access. You layer conditions: business hours only, approved infrastructure only, specific use cases only. Everything outside that policy scope remains encrypted and inaccessible.
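
One way to picture this governed access model is a default-deny policy check keyed on a registered identity. The sketch below is illustrative only: `AccessPolicy`, `AccessRequest`, `evaluate`, the identity name `approved-copilot`, the network label `corp-vpc`, and the 09:00 to 17:00 window are hypothetical names and values, not part of any specific product.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class AccessPolicy:
    """Hypothetical policy for one registered identity (human or AI)."""
    identity: str
    allowed_classifications: frozenset
    allowed_networks: frozenset
    start: time = time(9, 0)   # assumed business-hours window
    end: time = time(17, 0)


@dataclass(frozen=True)
class AccessRequest:
    identity: str
    classification: str
    network: str
    when: datetime


def evaluate(request: AccessRequest, policies: dict) -> tuple:
    """Return (granted, reason). Anything not explicitly allowed is denied."""
    policy = policies.get(request.identity)
    if policy is None:
        return False, "unregistered identity"
    if request.classification not in policy.allowed_classifications:
        return False, "classification out of scope"
    if request.network not in policy.allowed_networks:
        return False, "unapproved infrastructure"
    if not (policy.start <= request.when.time() < policy.end):
        return False, "outside business hours"
    return True, "granted"


# Usage: one approved AI identity, scoped to non-regulated data.
policies = {
    "approved-copilot": AccessPolicy(
        identity="approved-copilot",
        allowed_classifications=frozenset({"public", "internal"}),
        allowed_networks=frozenset({"corp-vpc"}),
    )
}
request = AccessRequest("approved-copilot", "internal", "corp-vpc",
                        datetime(2025, 3, 3, 10, 30))
print(evaluate(request, policies))  # (True, 'granted')
```

Because the check starts from denial, an unregistered AI tool and a registered tool asking for an out-of-scope classification are both refused without any tool-specific rule.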

The governance frameworks now entering enforcement — NIST AI RMF, ISO/IEC 42001, and the EU AI Act — all require documented, auditable controls over AI data access. For defense contractors operating under CMMC 2.0 and ITAR, the stakes are higher: unauthorized AI access to CUI or ITAR-controlled data is a contract-loss risk and potential regulatory offense.

A data-centric architecture that treats AI as a governed identity class satisfies these frameworks at the technical level, not just the policy level. That distinction between what you say you do and what you can prove is exactly what regulators and auditors are now demanding.

The Governance Reality Check: 93% Using AI, 7% Governing It

Only 25% of organizations have fully implemented AI governance programs. Only 7% have embedded those programs into their operations, despite 93% using AI in some capacity. And 54% of organizations admit they adopted AI too quickly and are now struggling to implement it responsibly.

The organizations that close this gap first will not just reduce their breach exposure. They will carry a structural advantage in procurement, contract retention, and regulatory readiness.

The deployment reality: AI data governance that operates at the file level can be operational in days rather than months. No data migration. No workflow disruption. Protection is applied where your data already lives.

FAQs: Shadow AI Data Governance

Can Zero Trust architecture prevent Shadow AI data leakage?

Partially. Zero Trust Architecture governs who can access systems and networks. It does not govern what authorized users do with data once they have accessed it, including whether they share it with an external AI tool. Data-centric security controls, where protection travels with the data itself, are required to close this gap. Zero Trust and data-centric security are complementary, not competing, approaches.

Can I control which AI tools access my cloud storage?

Yes, with file-level access controls. When files are individually encrypted with persistent access policies, AI agents and copilots that attempt to read protected files must satisfy the same context-aware policies as human users.

What’s the difference between AI governance platforms and data-centric security?

AI governance platforms manage the AI model lifecycle, bias monitoring, and compliance documentation. They ensure your AI systems behave responsibly. Data-centric security addresses a different but related problem: ensuring your sensitive data cannot be accessed by unauthorized AI in the first place. Think of data-centric security as the enforcement layer beneath your governance program. The governance framework defines the policy. The data layer enforces it.

What frameworks apply to AI data governance?

The NIST AI Risk Management Framework (AI RMF), ISO/IEC 42001, and the EU AI Act all require documented control over how AI systems access data. For defense contractors, CMMC 2.0 and ITAR impose strict controls on CUI and controlled technical data; unauthorized AI access to these categories is a compliance failure regardless of whether it was intentional.

How do we prove to auditors that AI hasn’t accessed regulated data?

AI-specific audit trails log every access attempt, both granted and denied, with full context: identity, application, device, location, timestamp, and policy outcome. That record gives you the evidence that auditors and regulators are increasingly demanding. The key phrase is “access denied,” not “we don’t believe the data was accessed.”
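
As a rough illustration, an audit record with those fields and a query over it might look like the sketch below. The field names, the `identity_type` values, and the helper names `to_log_line` and `ai_grants_on` are assumptions made for this example, not a standard schema.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AuditEvent:
    """Hypothetical audit record; field names are illustrative."""
    timestamp: str      # ISO 8601, UTC
    identity: str
    identity_type: str  # "human" or "ai"
    application: str
    device: str
    location: str
    file_id: str
    outcome: str        # "granted" or "denied"


def to_log_line(event: AuditEvent) -> str:
    """Serialize one event as a JSON line for an append-only log."""
    return json.dumps(asdict(event), sort_keys=True)


def ai_grants_on(log_lines, regulated_file_ids):
    """Return every event where an AI identity was granted a regulated file."""
    hits = []
    for line in log_lines:
        event = json.loads(line)
        if (event["identity_type"] == "ai"
                and event["outcome"] == "granted"
                and event["file_id"] in regulated_file_ids):
            hits.append(event)
    return hits


# Usage: an AI request for a regulated file was denied, so the query is empty.
log = [
    to_log_line(AuditEvent("2025-03-03T10:30:00Z", "approved-copilot", "ai",
                           "copilot", "srv-1", "us-east", "file-001", "denied")),
    to_log_line(AuditEvent("2025-03-03T10:31:00Z", "alice", "human",
                           "word", "laptop-7", "us-east", "file-001", "granted")),
]
print(ai_grants_on(log, {"file-001"}))  # []
```

An empty result is only meaningful evidence if every access path is forced through the logging layer, which is why logging denied attempts alongside granted ones matters.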

Can we start by blocking all AI and enabling trusted AI access later?

Yes, and for many organizations, that is the right starting approach. Applying file-level encryption and blocking all AI access provides immediate protection against Shadow AI exposure. When your organization is ready to adopt specific trusted AI tools, you update your access policies to authorize those providers for specific data sets. The enforcement infrastructure is already in place.