In mid-2025, security researchers disclosed CVE-2025-32711 — a critical vulnerability in Microsoft Copilot, dubbed EchoLeak. The attack used indirect prompt injection delivered via email: a hostile instruction embedded in a message that Copilot was processing, triggering the agent to exfiltrate sensitive data from the user's environment. No user interaction, no alert, no DLP event, and no CASB trigger.

The agent was authenticated. The data governance framework assumed a human was in the decision loop. It wasn't.

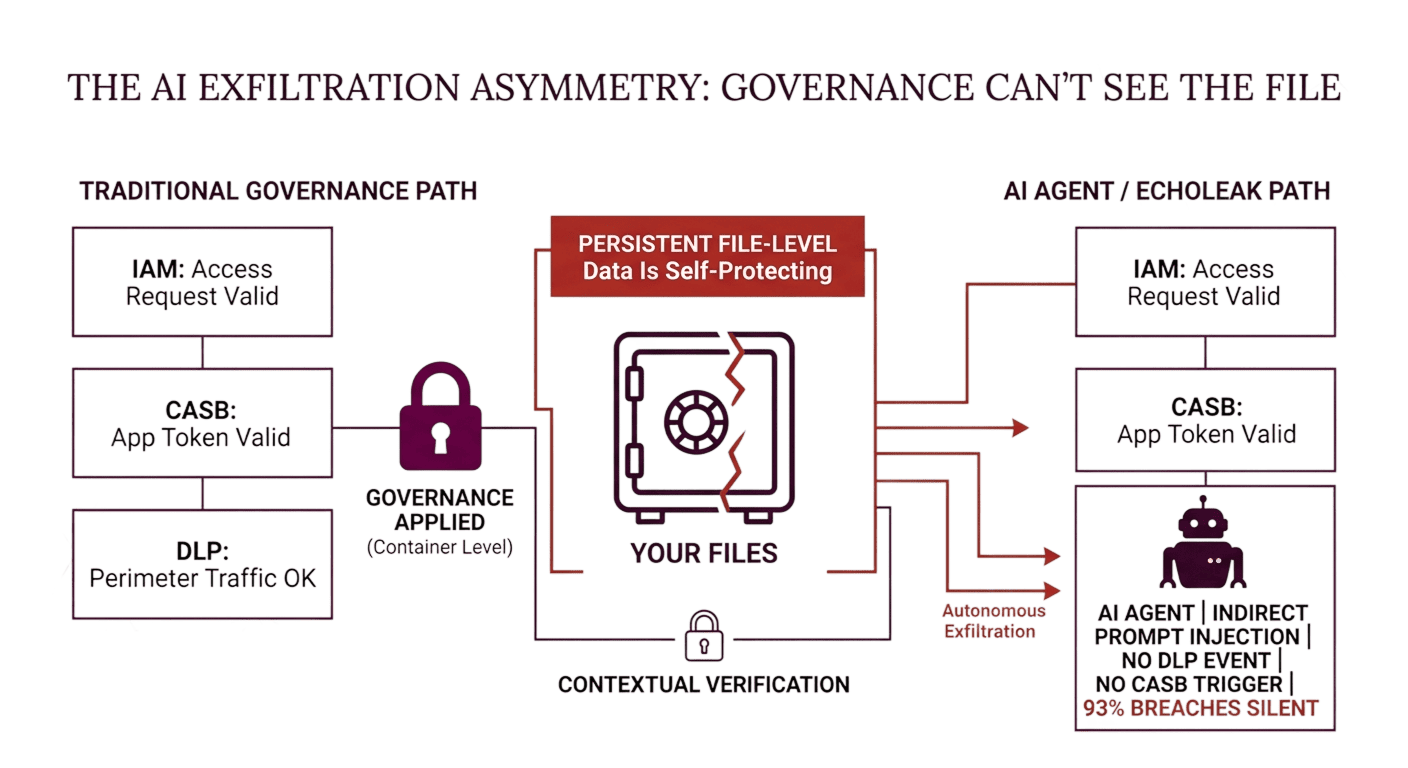

EchoLeak is not a Copilot problem. It is a structural problem with how data governance frameworks were designed, and what they were never designed to handle. Every major governance architecture in enterprise security today was built for a world where data moves because a human decides to move it. AI agents eliminate that assumption. They act autonomously, at scale, with legitimate credentials, in environments that existing tooling was built to protect.

The gap is not in detection capability. The gap is in what the existing tools were designed to protect against, and what they structurally cannot see.

🛡️ Is Your File Governance Built for AI-Driven Environments?

Agentic AI, Shadow AI tools, and indirect prompt injection create exfiltration pathways that DLP and CASB weren't designed to catch. Use this checklist to identify where your current controls have blind spots.

Why Do AI Agents Break Existing Governance Models?

The three tools that form the backbone of enterprise data governance — DLP, CASB, and IAM — share a common architectural dependency: they assume a human actor operating in a managed network environment.

DLP (Data Loss Prevention): Inspects network traffic, email content, and endpoint activity for policy violations. It identifies sensitive content moving through monitored channels, and it blocks or alerts you. What it cannot see: browser-based input (typing or pasting content into a web application), API calls made by integrated AI agents to internal file stores, or data transmitted through authenticated agent sessions that the DLP engine treats as authorized user activity. EchoLeak generated no DLP event because the data movement happened inside an authenticated session through an application's own API — exactly the kind of traffic DLP is configured to trust.

CASB (Cloud Access Security Broker): Validates OAuth tokens and monitors cloud application access. It can flag unusual login patterns, block access from non-compliant devices, and enforce application usage policies. What it cannot see: what an authenticated agent does inside a permitted application. CASB validates the access event, not the content of the agent's actions once access is granted.

IAM (Identity and Access Management): Controls who can authenticate to what system. It is the foundation of Zero Trust access control. What it cannot see is the difference between an authorized user accessing files for legitimate purposes and an AI agent accessing the same files under the user's credentials to fulfill an injected instruction.

All three tools are functioning correctly in an EchoLeak scenario. None of them are designed to catch what happened. This is not a configuration failure; it’s a scope limitation — and it applies to every governance architecture built on the assumption that data moves because a human with appropriate permissions decided to move it.

The Three AI Exfiltration Pathways Most Frameworks Miss

Pathway 1: Shadow AI — Visible Exfiltration, Invisible to DLP

43% of employees regularly use AI tools that haven't been approved by their organization's IT or security team. In most cases, this means pasting file contents — design specifications, contract terms, CUI summaries, proposal pricing — into a browser-based AI tool to get a formatted output or answer.

DLP does not inspect browser input fields by default. The content leaves the organizational boundary as typed or pasted text — not as a file transfer, not as an email attachment, not as a monitored download. No alert fires, no audit log entry exists in any system the organization controls. From a governance perspective, that data never left.

Pathway 2: Agentic Workflow Integration — Legitimate Access, No Governance on Exit

Enterprise AI integrations — Microsoft 365 Copilot, Salesforce Einstein, ServiceNow AI agents — operate with broad permissions granted during deployment. An agent configured to "help the sales team access relevant documents" may have read access to the entire SharePoint environment, not just the documents relevant to a specific task.

When an agent fulfils a request by retrieving and summarizing documents, it is performing an authorized action through authorized credentials. The governance question — should this specific document be accessible to this agent, for this purpose, under this instruction? — is not one that IAM, CASB, or DLP is positioned to answer. Access was granted at deployment time. Individual agent interactions are not evaluated against content-level policy.

Pathway 3: Indirect Prompt Injection — No User Involvement Required

Indirect prompt injection is the attack class that EchoLeak demonstrated at enterprise scale. A hostile instruction embedded in data the agent processes — an email, a document, a calendar entry, a webpage the agent has been asked to summarize — redirects the agent's behavior without the user's knowledge or involvement.

The NIST AI Risk Management Framework, updated in 2025 to include specific profiles for agentic AI systems, identifies indirect prompt injection as the most critical vulnerability class in autonomous agent architectures. The attack surface is not the agent's code. It is the data the agent reads, which means every document in a governed repository is a potential attack vector if an AI agent can be directed to access it.

What "Data Lifecycle Management" Misses In an AI Context

Data lifecycle management frameworks — classification at creation, access controls through active use, archival and disposal at end of life — are built on a predictable model: data moves through defined stages, in defined systems, under human direction.

AI agents break all three assumptions.

Data moves when the agent decides to move it, not when a human actor initiates a transfer. The stages are not predictable: an agent fulfilling a legitimate request may touch data across multiple lifecycle stages in a single session. And the direction is not human: an agent operating under an injected instruction is executing against a policy set by the attacker, not the organization.

The Cisco 2026 Data and Privacy Benchmark Study found that 93% of organizations have expanded their privacy programs because of AI, and 93% plan to allocate more resources to data governance in response to AI complexity. The investment is real. The challenge is that most of it is being directed at traditional DLM extensions — better classification, tighter CASB policies, more granular IAM rules — rather than the structural gap those tools cannot close.

You cannot solve an AI-driven exfiltration problem by configuring tools that were designed for human-driven exfiltration.

The Policy-Follows-the-File Principle

The governance frameworks that fail against AI agents share a common structural characteristic: the policy lives in the network, the application, or the access control layer, not in the data itself.

When an AI agent operates within a governed network on a permitted application using valid credentials, it is inside every control these frameworks enforce. The data it accesses is, from the perspective of DLP, CASB, and IAM, being accessed legitimately. The governance boundary is the system. The agent is inside the system.

The only architecture that holds against this threat model is one where the governance boundary is the file, where the policy is embedded in the object itself, not the environment in which the object lives.

This is what persistent file-level governance requires: every governed file carries its access policy as part of its structure. When an AI agent, an automated workflow, or an indirect prompt injection attempts to open or transmit the file, the file's own policy is evaluated against identity, device, location, network, time, and behavioral context — before access is granted.

The file doesn't know whether it's being opened by a human or an agent, and it doesn't need to. The contextual access check evaluates the access attempt against the policy regardless of who — or what — is attempting it. An agent operating with a user's credentials in an unexpected context will fail the contextual check on a governed file just as a human attacker using stolen credentials would. Access denied. No data leaves.

How File-Level Controls Address the AI Governance Gap

Data-centric security applies FIPS 140-3 validated per-file encryption with contextual access controls that evaluate every open attempt in real time. For AI governance specifically, three capabilities matter:

Contextual Evaluation Independent of Actor Type: The access control check doesn't distinguish between a human user and an AI agent making an API call. It evaluates the context of the access attempt: is the identity authorized, is the device compliant, is the location and network within policy, is the time within permitted parameters, does the access pattern match expected behavior? An agent operating under an injected instruction will typically fail on behavioral signals — access volume, file scope, or access timing that deviates from the authorized user's baseline — and the access attempt is denied at the file layer.

Behavioral Anomaly Detection With Autonomous Response: When access patterns deviate from baseline — bulk file access across repositories, access to sensitive files outside the user's authorization scope, or access initiated from unusual system contexts — anomalous behavior triggers an autonomous response. Access is suspended, and the security team is alerted. No human intervention is required before the response fires. This is the capability that catches AI-driven exfiltration that passes every authentication and authorization check: the files know they're being accessed abnormally, even if the access credentials are valid.

Governance That Persists Outside the Network Boundary: For files that have already left the network — through legitimate contractor sharing, authorized downloads, or previous workflows — persistent contextual access controls continue to evaluate every open attempt. A file shared with an external contractor three months ago is subject to the same policy evaluation today as it was at the moment of sharing. If that contractor's environment is now hosting an AI agent with injected instructions, the file's access policy is still enforced. The governance didn't stop at the network boundary — it never had one.

🔎 Scope Your AI Governance Gap In 14 Days

Implement persistent contextual controls across your entire infrastructure.

FAQ: AI Data Governance and Persistent File-Level Controls

How do AI agents exfiltrate data without triggering DLP alerts?

DLP inspects monitored channels — email, network traffic, endpoint file transfers. AI agents operating inside authenticated sessions via application APIs move data through channels that DLP treats as authorized activity. Browser-based AI tools receive data through input fields that most DLP configurations don't inspect. Indirect prompt injection redirects authenticated agent behavior without creating the kind of traffic signature DLP is configured to flag. The exfiltration happens inside the authorized perimeter, through authorized channels, using authorized credentials — all of which DLP was designed to trust.

Why doesn't CASB stop AI-driven data governance failures?

CASB validates access events — OAuth token legitimacy, application usage policies, and access from compliant devices. Once an agent is inside a permitted application using a valid token from a compliant device, CASB's role is complete. What the agent does inside that application — which files it accesses, what it transmits via the application's own APIs — is outside CASB's enforcement scope. CASB controls the gate; it doesn't govern what happens after the gate opens.

What is indirect prompt injection, and why is it a governance risk?

Indirect prompt injection is an attack technique where hostile instructions are embedded in data that an AI agent processes — emails, documents, web pages, calendar entries. The agent reads the data as part of a legitimate task and executes the injected instruction, believing it to be a valid directive. The user is not involved in the decision. NIST's 2025 AI Risk Management Framework identifies indirect prompt injection as the most critical vulnerability class in agentic AI architectures. For organizations where AI agents have access to file repositories, every document the agent can read is a potential injection vector.

What is the difference between data governance and data security in an AI context?

Traditional data governance frameworks define policies for how data should be handled — classification, retention, access control, and lifecycle stages. Data security implements technical controls to enforce those policies. In an AI context, the gap between governance (the policy) and security (the enforcement) widens significantly: governance frameworks assume predictable, human-directed data movement, while AI agents create unpredictable, autonomous movement that existing security controls weren't designed to intercept. Persistent file-level controls close that gap by making the enforcement mechanism the file itself — rather than the network or application environment where the policy previously lived.

How does persistent file-level governance handle authorized AI agent access?

For AI agents that require legitimate access to governed files — internal workflows, authorized integrations, sanctioned automation — the access policy can be configured to accommodate agent use cases while maintaining governance. Contextual access controls can include agent identity as an authorization parameter, time-bounded access windows for automated workflows, and scope restrictions that limit which files an agent can access for which purposes. The governance doesn't block AI use — it makes AI access auditable, bounded, and revocable at the file level, rather than governed only at the application or token level.