The AI Governance Question Your Board is Already Asking

It's Q2 2026. Your board has seen the headlines: EU AI Act enforcement ramping up, NIST building out guidance around its AI Risk Management Framework, and peer organizations deploying AI governance tools. Your CISO is being asked: "Which platform do we buy?"

The question itself contains a trap.

Most AI governance platforms answer a single question: What is the model doing? They audit model behavior, flag bias, track training data provenance, log model decisions, and map model outputs to compliance frameworks. These are the right questions to ask. But they're not the only questions that matter.

The question they don't answer is: What happens to the sensitive data feeding the model?

That's the gap this guide addresses.

Two Layers of AI Governance You Need to Understand

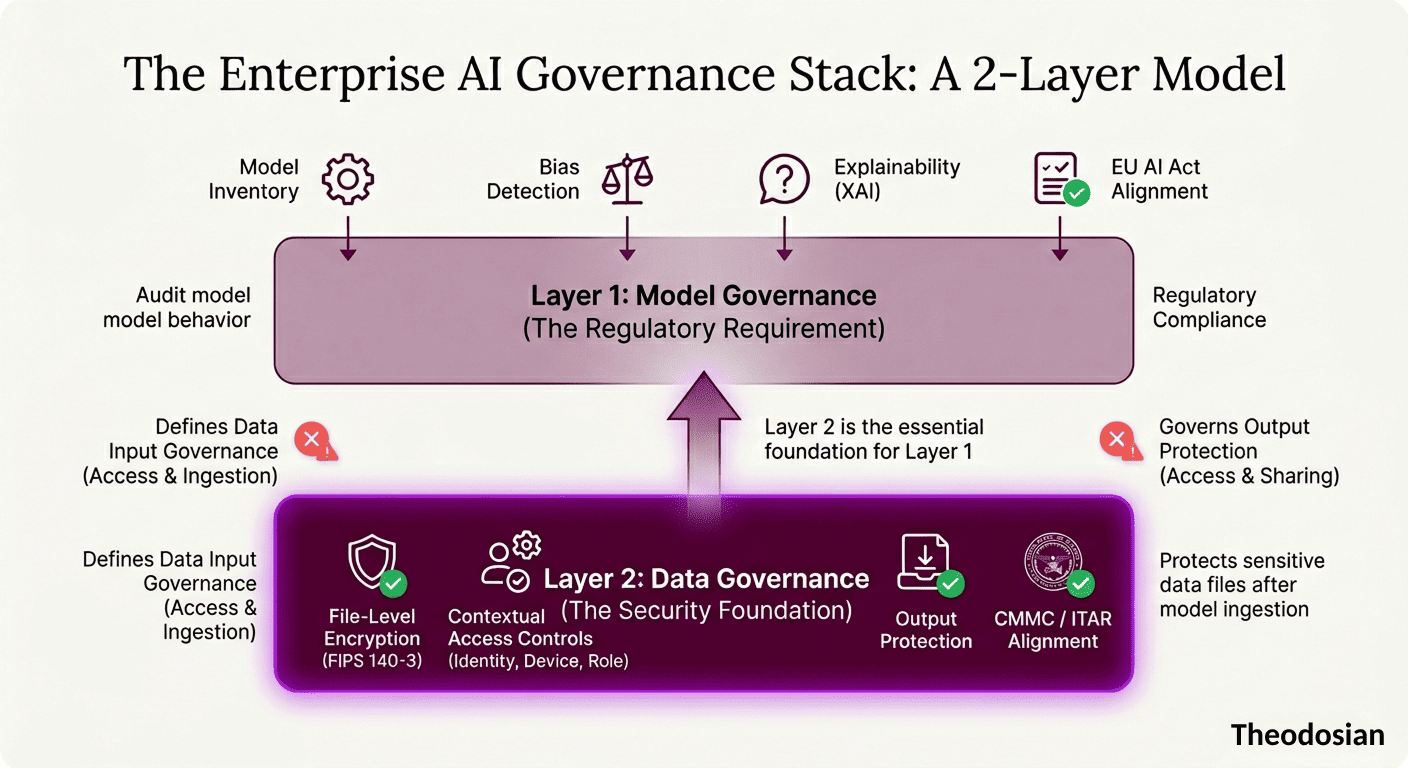

Most enterprises treat AI governance as a single problem. It's actually two:

Layer 1: Model Governance

What does the AI system do? Is it accurate? Fair? Transparent? Can we audit its training data? Can we explain its outputs? Layer 1 answers control questions about the model itself—its behavior, bias, transparency, and compliance with regulations like the EU AI Act.

Layer 2: Data Governance

What sensitive data is flowing into the model? Who can access model outputs that contain sensitive information? If the model processes CUI, ITAR-controlled files, PII, or proprietary designs, how is that data protected before ingestion and after generation? Layer 2 answers control questions about the data itself—its access, protection, and lifecycle within AI workflows.

Most AI governance platforms excel at Layer 1. Almost none address Layer 2.

This distinction matters because your compliance obligation is twofold:

- Govern the AI system itself (regulatory requirement).

- Protect the sensitive data the AI system touches (security requirement).

You need both.

How We Evaluated These Governance Platforms

We reviewed five enterprise AI governance and data protection platforms against five evaluation criteria:

- Model Governance Coverage — Breadth of model behavior, bias, transparency, and audit capabilities.

- Data Input Governance — Visibility and controls over what sensitive data enters AI pipelines.

- Output Protection — Controls over access to AI-generated outputs that contain sensitive information.

- Compliance Framework Alignment — Explicit mapping to EU AI Act, NIST AI RMF, and industry-specific standards.

- Audit Trail Generation — Completeness of logs for compliance and incident investigation.

The governance platforms reviewed:

- Microsoft Purview

- IBM OpenPages

- OneTrust

- ServiceNow GRC

- Theodosian

Each brings different strengths to different parts of the AI governance challenge.

Microsoft Purview: The Ecosystem Play

What It Governs Well: Purview fits directly into organizations already running Microsoft 365. The AI Hub provides governance for Copilot outputs within Purview's compliance ecosystem. Sensitivity labels extend to AI-generated content, and audit trails feed directly into Microsoft Purview Compliance Manager.

Compliance Framework Alignment: Strong alignment with EU AI Act governance requirements. Audit trails capture model usage, data input classifications, and output sensitivity labeling. Integrates directly with Microsoft Compliance Manager for regulatory mapping.

Where It Stops: Purview governs Copilot behavior, meaning what the model does within Microsoft's ecosystem. It tracks which sensitivity label is applied to the output. But it doesn't govern what happens when a user tries to share that Copilot output with someone who shouldn't see it, or when the output is exported out of Microsoft 365 and lands on someone's laptop as an unencrypted file.

Purview also requires that the sensitive data flowing into Copilot already be classified within Microsoft 365. If your sensitive files live in SharePoint and are labeled correctly, Purview sees them. If they're external files, sit on premises, or live in non-Microsoft systems, Purview's visibility is limited.

Best For: Organizations with heavy Microsoft 365 adoption, where Copilot is the primary AI consumption point.

IBM OpenPages: The Regulated Finance Play

What It Governs Well: OpenPages is built for regulated financial services. Its AI governance module sits within a broader GRC platform, so it connects model governance to risk management, audit workflows, and model inventory. It has strong model risk assessment capabilities, capturing model lineage, training data provenance, and outcome analysis.

Compliance Framework Alignment: Explicit mapping to financial services regulatory requirements (BCBS 239, FCA guidance on AI governance, and US model risk management guidance such as the Federal Reserve's SR 11-7). Audit trails feed into GRC workflows for regulatory reporting.

Where It Stops: OpenPages governs model risk. It has limited visibility into what sensitive data is flowing into models from outside its ecosystem. If a data scientist pulls a CUI file from an on-premises database and ingests it into a model, OpenPages logs the model's behavior, but not the data protection controls on that CUI file before ingestion.

Like Purview, OpenPages assumes sensitive data is already classified and tagged. It audits the classification. It doesn't enforce access controls on the files themselves.

Best For: Financial services organizations with existing IBM GRC infrastructure and model risk management as a primary governance driver.

OneTrust: The Privacy-First Play

What It Governs Well: OneTrust's Trust Intelligence Platform approaches AI governance from a privacy and consent perspective. It is strong at mapping AI model usage to data subject rights (GDPR, CCPA), tracks consent workflows for data used in training, and includes a model assessment module that flags privacy and fairness risks.

Compliance Framework Alignment: Explicit privacy framework alignment (GDPR, CCPA, LGPD). EU AI Act mapping includes high-risk AI system assessment workflows. Audit trails support privacy impact assessments and consent audits.

Where It Stops: OneTrust governs privacy risk and consent. It has limited functionality for protecting the actual files being used in AI training or inference. If a file containing personal data is used in a model, OneTrust logs that it was used and tracks consent. But OneTrust doesn't encrypt that file, enforce contextual access controls on it, or prevent unauthorized copies from being created during the AI ingestion process.

Best For: Privacy and compliance-heavy organizations where GDPR/CCPA mapping and consent workflows are primary governance drivers.

ServiceNow GRC: The IT Operations Play

What It Governs Well: ServiceNow's GRC platform is expanding into AI governance with policy management, workflow-based controls, and integration with IT operations. Audit trails feed into existing GRC processes. It is strong at IT governance integration: if AI governance sits within a broader IT risk management program, ServiceNow connects all the pieces.

Compliance Framework Alignment: NIST AI RMF mapping available through workflow templates. EU AI Act guidance templates in development. Audit trails support IT risk and compliance reporting.

Where It Stops: ServiceNow is governance infrastructure, not security infrastructure. It excels at defining policies and workflows, but it doesn't protect the data those workflows govern. If your policy says "CUI cannot be used in external AI models," ServiceNow enforces that policy through approval workflows. But if a user exports a CUI file and uses it to train a model anyway, ServiceNow's audit trail can capture the violation after the fact; it doesn't prevent the file from being opened without proper access controls.

Best For: Large enterprises where AI governance needs to sit within broader IT risk management and compliance reporting infrastructure.

Theodosian: The Data Protection Layer

What It Governs Well: Theodosian operates in a different category from the other four platforms. It's not an AI governance tool; it's a file-level encryption and data-centric security platform. Where governance platforms audit what the model does, Theodosian protects the sensitive files that flow into and out of AI systems.

In practice: when employees upload sensitive documents to AI tools, whether that's a copilot, a summarization tool, or a custom agent, Theodosian applies per-file FIPS 140-3 validated encryption with contextual access controls. Access is governed by identity (who you are), device trust (is your device secure?), location, time of day, and role. If the required conditions aren't met, access is denied at the file layer. The user sees nothing, not even ciphertext.
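To illustrate what an "every condition must pass" contextual policy looks like, here is a minimal Python sketch. The `FilePolicy` and `AccessRequest` types, their fields, and the `evaluate` function are hypothetical names invented for this example, not Theodosian's API; a real deployment would pull these attributes from an identity provider and a device-management service rather than from plain booleans.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class AccessRequest:
    """Attributes gathered when a user tries to open a protected file (hypothetical)."""
    user_id: str
    identity_verified: bool  # e.g. the user completed MFA with the identity provider
    role: str
    device_trusted: bool     # e.g. the device passed a posture/compliance check
    country: str
    local_time: time


@dataclass(frozen=True)
class FilePolicy:
    """Per-file contextual access policy (hypothetical)."""
    allowed_roles: frozenset[str]
    allowed_countries: frozenset[str]
    window_start: time
    window_end: time


def evaluate(policy: FilePolicy, req: AccessRequest) -> tuple[bool, str]:
    """Return (allowed, reason). Every condition must pass; the first failure denies."""
    if not req.identity_verified:
        return False, "identity not verified"
    if req.role not in policy.allowed_roles:
        return False, "role not permitted"
    if not req.device_trusted:
        return False, "device failed trust check"
    if req.country not in policy.allowed_countries:
        return False, "location not permitted"
    if not (policy.window_start <= req.local_time <= policy.window_end):
        return False, "outside permitted time window"
    return True, "all conditions met"


if __name__ == "__main__":
    policy = FilePolicy(
        allowed_roles=frozenset({"engineer"}),
        allowed_countries=frozenset({"US"}),
        window_start=time(7, 0),
        window_end=time(19, 0),
    )
    request = AccessRequest(
        user_id="alice", identity_verified=True, role="engineer",
        device_trusted=False, country="US", local_time=time(10, 30),
    )
    print(evaluate(policy, request))  # (False, 'device failed trust check')
```

Returning a reason alongside the decision is a design choice that makes each denial explainable in the per-file audit log, while the caller only ever sees allow or deny.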

Compliance Framework Alignment: Theodosian specifically addresses the CMMC Level 2 cryptography requirements SC.L2-3.13.11 (FIPS-validated cryptography) and SC.L2-3.13.10 (cryptographic key management), numbered SC.3.177 and SC.3.187 under CMMC 1.0, through file-layer encryption and access controls. It generates per-file audit logs that feed into compliance evidence packages. It partially aligns with the NIST AI RMF (the GOVERN and MAP functions) for data asset protection; it does not address model transparency or fairness assessment.

Where It Stops: Theodosian doesn't govern model behavior, model inventory, bias detection, or AI transparency. It doesn't provide model risk assessment or output monitoring. Organizations that deploy Theodosian still need an AI governance platform (like those above) to audit how the model behaves and whether it's fair, accurate, and explainable. The two categories of tools are complementary, not competitive; they solve different problems.

Best For: Security teams building an AI governance stack who recognize that governing model behavior is only half the challenge. Organizations handling CUI under CMMC, ITAR-controlled designs, regulated healthcare data, or proprietary information that flows into AI systems need both model governance and file-layer data protection.

Pricing: Free 14-day pilot available. Enterprise pricing available on request.

Quick Comparison: What Each Platform Governs

The first four platforms excel at auditing what the model does. Theodosian protects the sensitive files before and after the model processes them. Most organizations deploying an AI governance platform will eventually ask: "What controls do we have on the sensitive files themselves?" That's where these tools diverge.

What AI Governance Platforms Do—And Don't—Cover

Here's what the first four governance platforms above will do for you:

- Audit what data was used to train the model ✓

- Flag when a model output contains sensitive information ✓

- Log who accessed the model ✓

- Map model behavior to compliance requirements ✓

Here's what they don't address:

- Prevent a CUI file from being copied before it enters the AI pipeline ✗

- Enforce contextual access controls on individual files before they're ingested ✗

- Ensure that when a user downloads an AI-generated output containing PII, that output remains encrypted and access-controlled outside the governance platform ✗

- Stop a file from being processed if the person accessing it fails a device trust check ✗

The AI governance category has largely addressed the model governance problem. But these platforms operate on the assumption that sensitive data is already protected, or at least already classified. Most organizations find they need both: a governance platform that audits the model, and a data protection layer that controls access to the files before and after the model touches them.

For defense contractors handling ITAR and CUI, or regulated enterprises where document-level data protection is a compliance requirement, this is more than a nice-to-have. Your governance tool logs that a CUI file entered a model. By that point, your data protection layer should already have enforced which users and devices could open the file, so that only authorized, device-trusted access was possible.

What You Should Do Now

Step 1: Choose an AI governance platform for auditing and managing model behavior. Pick the one that aligns with your ecosystem:

- Microsoft for Copilot-heavy organizations with strong Microsoft 365 adoption

- IBM for financial services with existing GRC infrastructure

- OneTrust for privacy-heavy organizations where GDPR and consent are primary drivers

- ServiceNow for IT-integrated governance, where AI governance needs to sit within broader risk programs

These platforms answer the question: What is the model doing?

Step 2: Ask your security team the data-layer question: "What controls do we have on the sensitive files entering these AI pipelines before the model ever sees them? Who can download outputs? Can they export them? Can they copy them?"

If the answer is "we rely on file permissions" or "we have a DLP alert for violations," you likely need a data protection layer. An AI governance platform logs what happened. A data protection layer prevents unauthorized access from happening in the first place.
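To make the difference between logging and preventing concrete, here is a minimal Python sketch of an ingestion gate that can run in two modes. It reuses the example policy from the ServiceNow section ("CUI cannot be used in external AI models"); the `IngestionGate` class, the `Mode` enum, and the label and destination strings are hypothetical and do not represent any vendor's API.

```python
from dataclasses import dataclass, field
from enum import Enum


class Mode(Enum):
    AUDIT_ONLY = "audit_only"  # detective control: record the violation, let the action proceed
    ENFORCE = "enforce"        # preventive control: record the violation and block the action


@dataclass
class IngestionGate:
    """Hypothetical checkpoint between a file store and an AI model."""
    mode: Mode
    audit_log: list[str] = field(default_factory=list)

    def submit(self, file_label: str, destination: str) -> bool:
        """Return True if the file is handed to the model, False if it is blocked."""
        # Example policy: "CUI cannot be used in external AI models."
        violation = file_label == "CUI" and destination == "external"
        if not violation:
            return True
        self.audit_log.append(f"violation: {file_label} file submitted to {destination} model")
        # Only audit-only mode lets a violating file through to the model.
        return self.mode is Mode.AUDIT_ONLY


if __name__ == "__main__":
    detective = IngestionGate(Mode.AUDIT_ONLY)
    preventive = IngestionGate(Mode.ENFORCE)
    print(detective.submit("CUI", "external"))   # True: the model received the file
    print(preventive.submit("CUI", "external"))  # False: the file never reached the model
    print(detective.audit_log == preventive.audit_log)  # True: both logged the same event
```

Both gates produce the same audit entry; the only difference is whether the CUI file reached the model. That is the gap between an audit trail and a control that acts before access.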

Step 3: If data protection is a gap, evaluate a file-layer encryption solution like Theodosian. This addresses Layer 2: protecting the sensitive files themselves, not just governing the model that touches them.

🔒 Protect Your AI Pipelines From the Data Up

Most security stacks govern the model. Theodosian protects the data.

FAQs: AI Governance Tools

Do we need both an AI governance platform AND a data protection layer?

Yes. An AI governance platform audits the model's behavior and logs compliance-relevant decisions. A data protection layer prevents unauthorized access to sensitive files before and after they're processed. They solve different problems. You need both for complete coverage of ITAR, CUI, and other regulated data.

Will our existing DLP tools protect files in AI workflows?

Traditional DLP (Data Loss Prevention) tools monitor network traffic and endpoint activity to flag policy violations. In many deployments they are tuned to alert rather than block, so they report the action after it happens. In AI workflows, by the time DLP detects that a file has been copied, the copy already exists. A data protection layer like Theodosian makes any such copy unusable without authorization by encrypting the file and enforcing contextual access controls every time it is opened.

Does per-file encryption slow down AI ingestion?

Not meaningfully, in most cases. Encryption is applied at the file level while the file is at rest. When an AI system needs to process the file, an authorized process decrypts it in a secure sandbox environment, and decryption happens once per ingestion rather than every time the model reads the content. Modern symmetric encryption runs at high throughput on CPUs with hardware acceleration, so the performance impact is typically negligible.

How does contextual access control work if I'm offline?

With Theodosian, if your device goes offline, access is denied until connectivity is restored and the controls can be re-evaluated.
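This "deny while offline" behavior is a fail-closed design. The minimal Python sketch below shows the pattern under stated assumptions: `fetch_live_decision` is a hypothetical callable standing in for a call to a policy service, and connectivity failures are modeled with Python's built-in `ConnectionError` and `TimeoutError`. It is not Theodosian's client code.

```python
from typing import Callable


def can_open_file(fetch_live_decision: Callable[[], bool]) -> bool:
    """Grant access only on a fresh allow decision from the policy service.

    fetch_live_decision is a hypothetical callable that contacts the policy
    service and returns True (allow) or False (deny). If the service cannot
    be reached, this function fails closed instead of reusing a cached grant.
    """
    try:
        return fetch_live_decision()
    except (ConnectionError, TimeoutError):
        return False  # offline: deny until the controls can be re-evaluated


if __name__ == "__main__":
    def unreachable() -> bool:
        raise ConnectionError("policy service unreachable")

    print(can_open_file(lambda: True))  # True: online and the policy allows access
    print(can_open_file(unreachable))   # False: offline, so access is denied
```

Failing closed trades availability for assurance: an offline laptop cannot open protected files, but a stolen or disconnected device also cannot bypass a policy change made after it went offline.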

If Theodosian uses zero-knowledge key management, how do we recover keys if the decentralized network has issues?

Theodosian operates on a principle of Sovereign Continuity. While we provide the platform for file-level encryption, we have architected the system so that your data is never "locked" behind our company's existence. For every file protected, a unique, non-identifiable FILE_SEED is generated. This seed is a mandatory ingredient used to regenerate that specific file’s encryption keys.

Theodosian possesses these seeds to facilitate seamless access, but the seeds themselves are not keys and cannot decrypt your data in isolation. To ensure long-term resilience, enterprises can maintain backups or joint custody of their FILE_SEED lists, ensuring they have the required ingredients to regenerate keys and access data even if Theodosian is unavailable.
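One common way to make a per-file seed a required-but-insufficient ingredient is to feed it into a standard key-derivation function together with a secret the enterprise holds. The Python sketch below implements HKDF (RFC 5869) with SHA-256 using only the standard library. This is an illustrative assumption, not Theodosian's documented derivation; `customer_secret` and `regenerate_file_key` are hypothetical names.

```python
import hashlib
import hmac
import secrets


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF with SHA-256 as specified in RFC 5869 (extract, then expand)."""
    if length > 255 * hashlib.sha256().digest_size:
        raise ValueError("length too large for HKDF-SHA256")
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()  # extract step
    okm, block, counter = b"", b"", 1
    while len(okm) < length:  # expand step: T(i) = HMAC(PRK, T(i-1) | info | i)
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def regenerate_file_key(customer_secret: bytes, file_seed: bytes) -> bytes:
    """Hypothetical derivation in which the per-file seed is required but not sufficient.

    Knowing file_seed alone does not reveal the key; customer_secret alone
    cannot reproduce this particular file's key without its seed.
    """
    return hkdf_sha256(ikm=customer_secret, salt=file_seed, info=b"file-encryption-key")


if __name__ == "__main__":
    customer_secret = secrets.token_bytes(32)  # hypothetical enterprise-held secret
    file_seed = secrets.token_bytes(32)        # per-file seed, backed up by the enterprise
    key_today = regenerate_file_key(customer_secret, file_seed)
    key_after_restore = regenerate_file_key(customer_secret, file_seed)
    print(key_today == key_after_restore)  # True: same ingredients, same key
```

Because the derivation is deterministic, an enterprise that backs up both its FILE_SEED list and its own secret can regenerate each file's key without depending on any vendor service.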